Creating and configuring a PegaUnit test case for a strategy

After you configure a strategy with customer information, you can run a test against a single record of customer information. You can then convert the test run to a test case that you run manually to compare the output of the strategy with the results that you expect. For example, you can test that a proposition is offered to the appropriate customer.

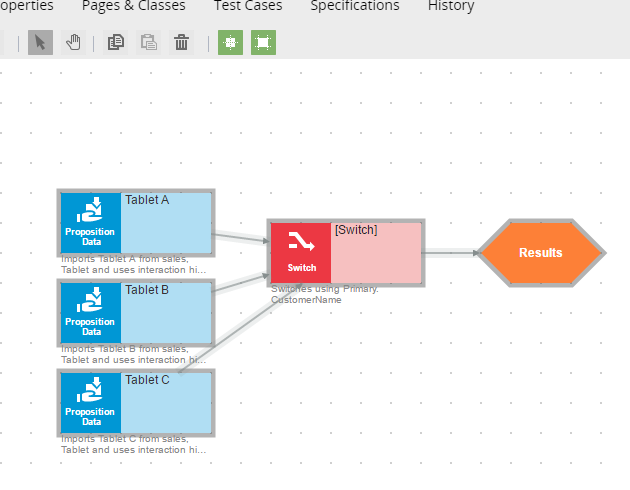

In the following example, a switch, which is a component that selects an issue or component, is configured so that one of the following propositions is offered:

- Tablet A proposition is offered if the customer credit status is Great

- Tablet B proposition is offered if the customer credit status is Good

- Tablet C proposition is offered otherwise
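
The routing that this switch performs can be sketched in a few lines of Python. This is only an illustration of the decision logic, not how Pega evaluates a switch component; the function name `select_proposition` and the credit status value `Fair` are hypothetical and do not come from the product.

```python
def select_proposition(credit_status: str) -> str:
    """Mimic the example switch: choose a proposition from the credit status."""
    if credit_status == "Great":
        return "Tablet A"
    if credit_status == "Good":
        return "Tablet B"
    # Any other status falls through to the "otherwise" branch.
    return "Tablet C"


# "Fair" is a made-up status used only to exercise the otherwise branch.
for status in ("Great", "Good", "Fair"):
    print(status, "->", select_proposition(status))
```

A test case for the strategy checks the same mapping from the outside: it supplies one customer record and asserts on the proposition that comes back.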

Example strategy

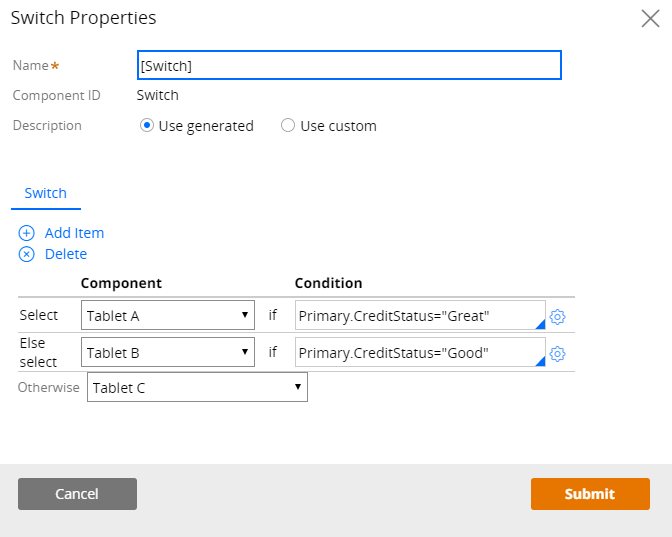

Switch configured to offer a proposition to the appropriate customer

Creating a test case for a strategy

To create a test case for a strategy, complete the following steps:

- Open the strategy rule form.

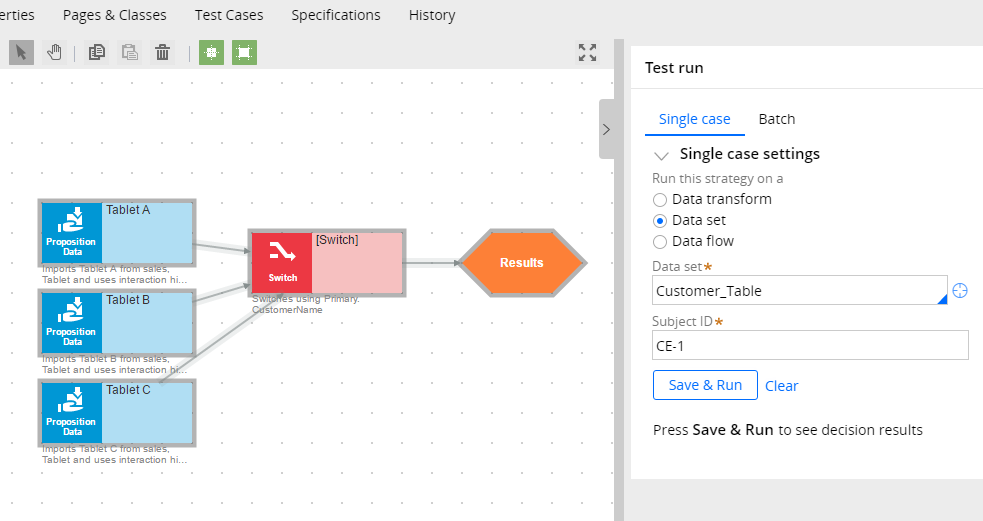

- Click the Arrow tab in the rule form to open the Test run pane, click the component on which you want to run the test, and enter the appropriate test data.

Test data in the Test run pane

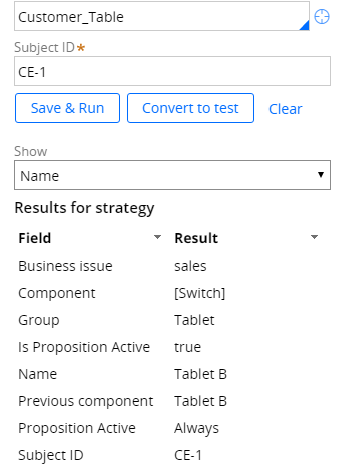

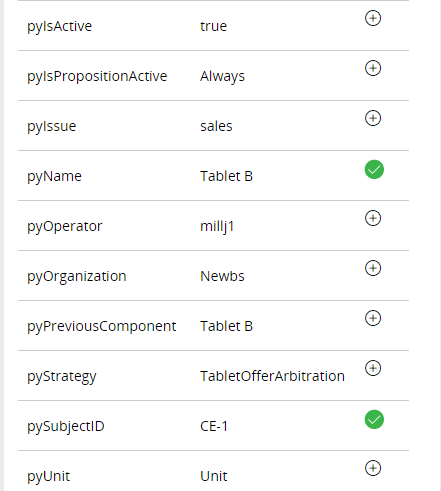

In this example, the Data set field is set to Customer_Table, which holds the customer information. The Subject ID field is set to CE-1, which is the customer ID and the key of the data set. The test runs on the Result component. Because the credit status for customer CE-1 is Good, Tablet B should be offered.

- Click Save & Run.

Returned test results

- Click Convert to test.

- In the Create Test Case form, click Create and open.

- Optional: Click the Gear icon in the Create Test Case form to rename the test case. As a best practice, give your test a name that identifies the purpose of the test case. For example, you could name this test case Tablet regression.

Note: Do not modify the context information. If you do, the test case will not work.

- Add properties to the test case.

- In the Actual Results panel, select the properties or pages that you want to add. If you select a page, all the pages and properties that are on the page are added. The properties that you add are displayed in the Expected results pane.

In this example, you would expand the pxResults page, and then expand the pxResults(1) page, which contains all the results from the rule run. Next, you would select the pyName and pySubjectID properties, which are the two properties (proposition and CustomerID) that this strategy rule tests.

Selected properties in the Actual Results panel

- Click Done. The Edit Test Case rule form is displayed and shows two parameters:

- componentName: Name of the component (for example, Switch) that you are testing.

- pzRandomSeed: Internal parameter, which is the random seed for the Split and Champion Challenger components. It is generated for all components but applies only to the Split and Champion Challenger components.

If you change the value of either the componentName or pzRandomSeed parameter, the test case might not return the results that you expect. For example:

- If you configure the test to run on a different component, the test might fail if a property is not found.

- If you change the pzRandomSeed value on Split or Champion Challenger shapes, the test fails.

- It is recommended that you delete the expected run-time assertion; otherwise the assertion might fail and show errors. The test does not fail, however, even if the assertion does.

- It is recommended that you delete the result count assertion. Modifying this value could result in the test failing.

- Click Save to save the rule form.
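
The reason that a changed pzRandomSeed value breaks a test on a Split or Champion Challenger component can be illustrated with a generic seeded random choice. The sketch below uses Python's standard `random` module and is an assumption about the general technique, not Pega's implementation; the function `split_branch`, its parameters, and the branch names are hypothetical.

```python
import random


def split_branch(seed: int, share_a: float = 0.5) -> str:
    """Pick branch "A" or "B" pseudo-randomly from a fixed seed."""
    rng = random.Random(seed)  # a private generator, seeded explicitly
    return "A" if rng.random() < share_a else "B"


# The same seed always reproduces the same branch, so the recorded
# expected result stays valid from run to run.
print(split_branch(42) == split_branch(42))  # True

# Different seeds can land on different branches, which is why editing
# the seed can invalidate the expected result stored in a test case.
print(sorted({split_branch(seed) for seed in range(20)}))
```

Because the test case stores both the seed and the expected result, the two must be kept together.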

Opening and running test cases

You can open and run test cases on the Automated Testing landing page in Designer Studio and on the Test Cases tab of the strategy rule.

To access test cases from the Test Cases tab, complete the following steps:

- In the strategy rule form, click Test Cases.

- Select the check box next to the test that you want to run, and click Run selected. The Result pane indicates whether the test passed or failed.

- Click the result to open it.

The test passes if the expected output of all the properties matches the results that are returned by running the strategy rule.

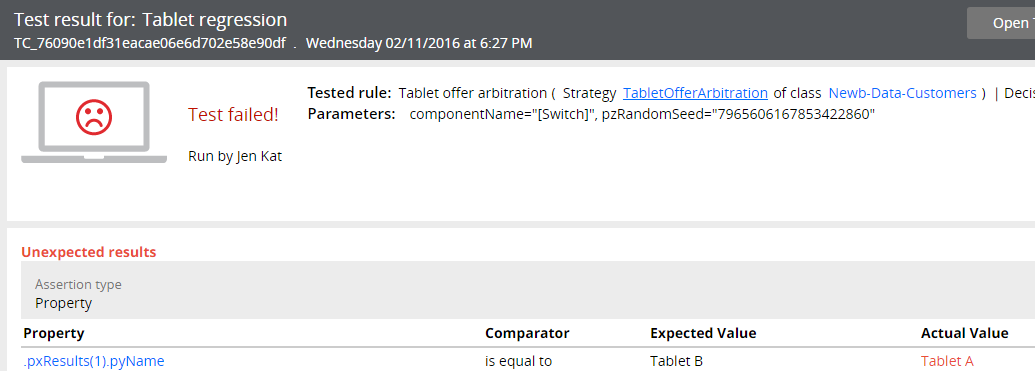

However, the test fails if the expected output of any property does not match the results that are returned. For example, if you change the switch on the strategy to offer Tablet A if the credit status is Good and to offer Tablet B if the credit status is Great, the test fails. The expected result for customer CE-1 is Tablet B, but running the strategy rule returns Tablet A.

Failed test results
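
The pass and fail rule described above amounts to a property-by-property comparison of expected and actual values. The following sketch illustrates that rule under stated assumptions: the function `compare_results` is hypothetical, and the dictionaries only stand in for the pyName and pySubjectID properties selected in the example; it is not the PegaUnit comparison code.

```python
def compare_results(expected: dict, actual: dict) -> list:
    """Return a list of mismatches; an empty list means the test passes."""
    mismatches = []
    for prop, want in expected.items():
        got = actual.get(prop)
        if got != want:
            mismatches.append(f"{prop}: expected {want!r}, got {got!r}")
    return mismatches


# Expected values recorded when the test case was created for customer CE-1.
expected = {"pyName": "Tablet B", "pySubjectID": "CE-1"}

# Run against the original switch: every property matches.
passing_run = {"pyName": "Tablet B", "pySubjectID": "CE-1"}
# Run after the switch was changed so that Good maps to Tablet A.
failing_run = {"pyName": "Tablet A", "pySubjectID": "CE-1"}

print(compare_results(expected, passing_run))  # []
print(compare_results(expected, failing_run))
# ["pyName: expected 'Tablet B', got 'Tablet A'"]
```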
