Understanding MLOps

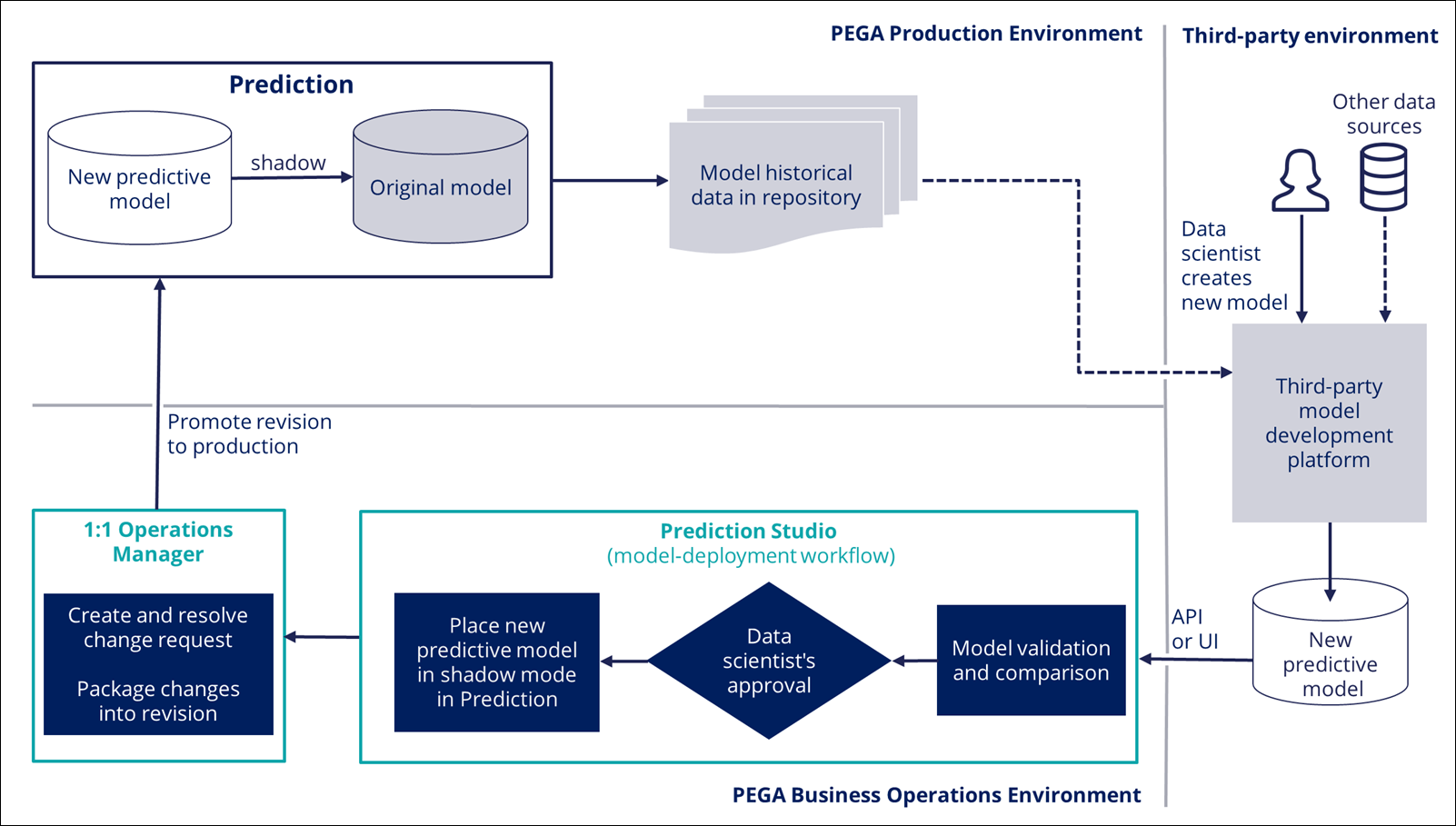

The workflow for updating models by using Machine Learning Operations (MLOps) is a standardized process that data scientists can use to replace active models in predictions with other models, scorecards, or fields that represent scores, and to deploy the new models to the production environment.

Model update workflow

A model update process consists of the following stages:

- The process starts when you update a prediction in your non-production environment by replacing an active model with a predictive model, scorecard, or field in the data model that contains a score. You can select a model from Prediction Studio, upload a PMML or H2O model to Prediction Studio, or connect to an external model that you developed on Amazon SageMaker or Google AI Platform.

When creating a machine learning model in a third-party environment, you can use historical data from Pega Platform and other sources to train the model. For more information, see Replacing models in predictions with MLOps or Updating active models in predictions through API with MLOps.

- You can validate the candidate model against a data set and compare it with the current model. This analysis provides metrics that help you decide which model performs better on a static data set.

- After you evaluate the models, you can approve or reject the candidate model for deployment to production. You can place an approved model in shadow mode (supported for predictive models) or replace the current model with the new model. For more information, see Evaluating candidate models with MLOps or Updating active models in predictions through API with MLOps.

- If you approve a model, the decision strategy that uses the updated prediction is changed, and both the strategy and the candidate model are added to a new branch.

- Depending on how your environment is configured, deployment involves different stages and components:

  - In a Pega Customer Decision Hub environment, the system creates and resolves a change request in Pega 1:1 Operations Manager. A team lead can verify the changes in the rules and the relevant documentation. The change request is packaged into a revision, and a deployment manager can promote the prediction with the candidate model to production. For more information, see Understanding the change request flow.

  - In other environments, where predictions serve purposes such as customer service and intelligent automation, Pega 1:1 Operations Manager and Revision Manager are not present. A system architect merges the branch that contains the model update into the application ruleset. A deployment manager can then deploy the application ruleset to production.

- If you deploy the candidate model to production in shadow mode, it runs alongside the original model, receives production data, and generates outcomes, but the outcomes do not impact business decisions.

If the candidate model performs well in production, you can promote it to the active model position. For more information, see Promoting shadow models with MLOps or Updating active models in predictions through API with MLOps.

The model is deployed through the business change pipeline by using Pega 1:1 Operations Manager and Revision Manager. For more information, see the Pega 1:1 Operations Manager User Guide on the Pega Customer Decision Hub product page.

- If the model proves ineffective, you can reject it, and add another model to the prediction. For more information, see Rejecting shadow models with MLOps or Updating active models in predictions through API with MLOps.
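The validation stage in the list above compares the candidate model with the current model on a static data set. As a rough illustration only, the following Python sketch scores both models on the same labeled records and compares the area under the ROC curve (AUC). The choice of AUC, the function names `auc` and `compare_models`, and the sample data are assumptions made for this example; they do not represent the metrics or APIs that Prediction Studio uses.

```python
from typing import Dict, Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve, computed by pairwise comparison.

    Each (positive, negative) pair counts 1 if the positive record scores
    higher, 0.5 on a tie, and 0 otherwise.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def compare_models(
    labels: Sequence[int],
    current_scores: Sequence[float],
    candidate_scores: Sequence[float],
) -> Dict[str, object]:
    """Score both models on the same static data set and compare their AUC."""
    current_auc = auc(labels, current_scores)
    candidate_auc = auc(labels, candidate_scores)
    return {
        "current_auc": current_auc,
        "candidate_auc": candidate_auc,
        "candidate_is_better": candidate_auc > current_auc,
    }


# Hypothetical validation data: 1 = positive outcome, 0 = negative outcome.
labels = [1, 0, 1, 0, 1, 0]
current_scores = [0.6, 0.5, 0.4, 0.3, 0.7, 0.65]
candidate_scores = [0.8, 0.3, 0.7, 0.4, 0.9, 0.2]

result = compare_models(labels, current_scores, candidate_scores)
print(result)
```

A single metric on a single data set is only one input to the approval decision. Shadow mode exists so that a candidate can also be observed on production data before it influences business decisions.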

The diagram at the top of this topic shows an example of the model update process. In this example, a data scientist approves a predictive model for deployment to production as a shadow of the original model.
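The path of a candidate model through these stages can also be read as a small state machine. The Python sketch below is a simplified illustration, not a Pega API: the stage names, the action names, and the `advance` and `trace` helpers are all hypothetical, and the deployment steps (branches, change requests, revisions) are left out.

```python
from enum import Enum
from typing import Dict, List, Tuple


class Stage(Enum):
    """Hypothetical stages of a candidate model in the update workflow."""
    CANDIDATE = "candidate"   # added to the prediction in non-production
    VALIDATED = "validated"   # compared with the current model on a data set
    SHADOW = "shadow"         # runs in production without affecting decisions
    ACTIVE = "active"         # drives business decisions
    REJECTED = "rejected"     # removed from the prediction


# Allowed (stage, action) pairs and the stage that each one leads to.
TRANSITIONS: Dict[Tuple[Stage, str], Stage] = {
    (Stage.CANDIDATE, "validate"): Stage.VALIDATED,
    (Stage.VALIDATED, "approve_shadow"): Stage.SHADOW,
    (Stage.VALIDATED, "approve_replace"): Stage.ACTIVE,
    (Stage.VALIDATED, "reject"): Stage.REJECTED,
    (Stage.SHADOW, "promote"): Stage.ACTIVE,
    (Stage.SHADOW, "reject"): Stage.REJECTED,
}


def advance(stage: Stage, action: str) -> Stage:
    """Return the next stage, or raise ValueError if the action is not allowed."""
    try:
        return TRANSITIONS[(stage, action)]
    except KeyError:
        raise ValueError(
            f"Action {action!r} is not allowed in stage {stage.value!r}"
        ) from None


def trace(actions: List[str]) -> List[Stage]:
    """Apply the actions in order, starting from CANDIDATE, and record each stage."""
    stage = Stage.CANDIDATE
    history = [stage]
    for action in actions:
        stage = advance(stage, action)
        history.append(stage)
    return history


# The scenario in this topic: approve as a shadow, then promote to active.
shadow_path = trace(["validate", "approve_shadow", "promote"])
print(" -> ".join(s.value for s in shadow_path))
# prints: candidate -> validated -> shadow -> active
```

Keeping the allowed transitions in one table makes the constraints explicit. For example, in this sketch a model cannot be promoted unless it is in shadow mode, and a rejected model has no further transitions.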
